In February, Alipay announced a milestone: its AI Pay solution had processed more than 120 million transactions in a single week. The number wasn’t a projection or a roadmap slide. It was an operational fact, announced via BusinessWire and covered by Morningstar, FinTech Magazine, and CoinDesk, among others.

That figure captures the velocity of what’s happening in agentic commerce right now. Stripe launched its Agentic Commerce Suite in December 2025, introducing Shared Payment Tokens that let AI agents initiate purchases using a buyer’s credentials without exposing the underlying card data. Visa and Mastercard have both published trusted agent frameworks and agent token protocols. MoonPay, in late February 2026, shipped a non-custodial infrastructure layer that gives AI agents autonomous access to crypto wallets and fiat on-ramps. And those are just the moves from the past few months.

The infrastructure race is real. What hasn’t kept pace is the trust layer.

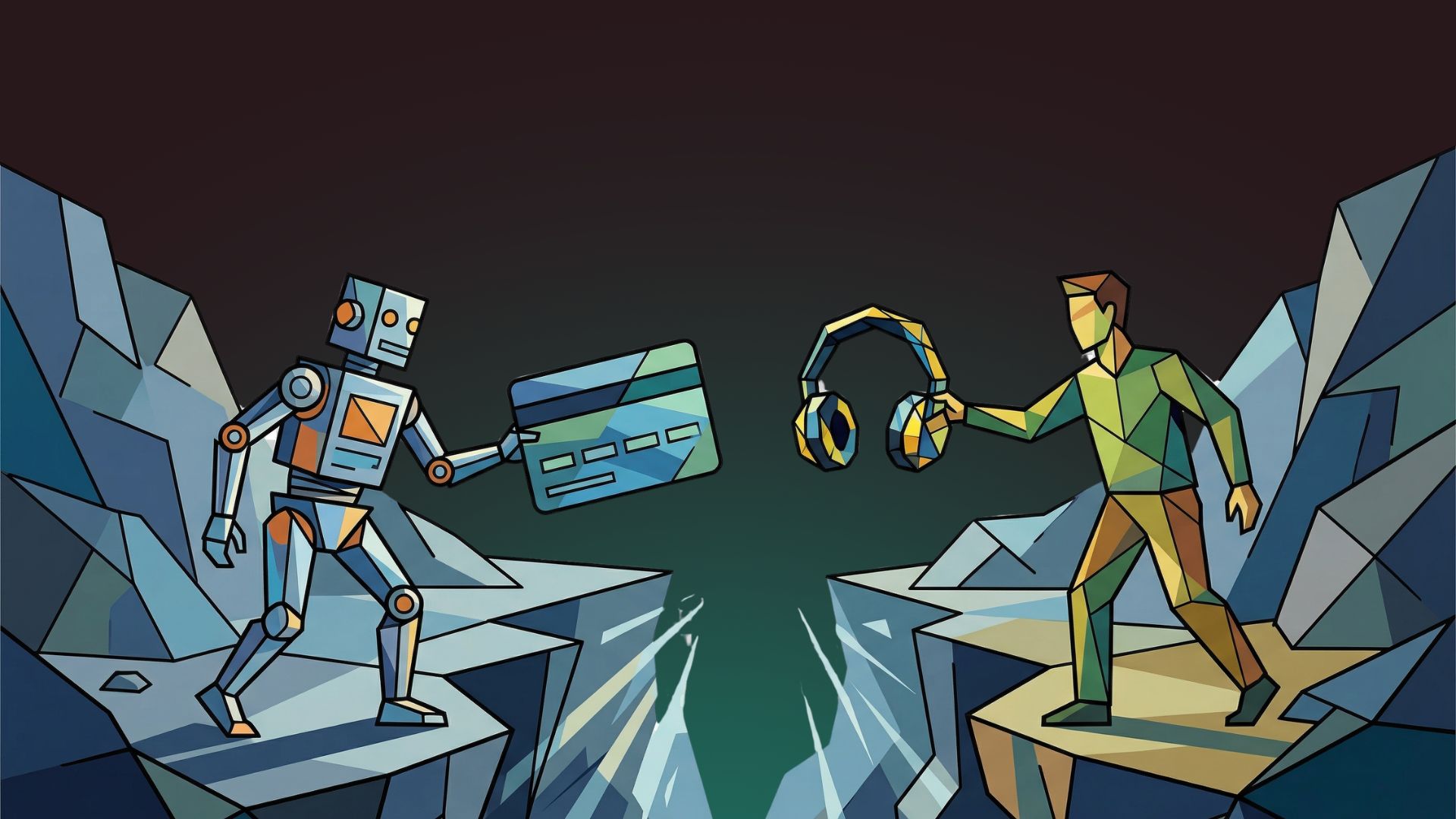

The Authorization Problem

Today, most approaches to AI agent payments rely on a familiar pattern: a user authorizes an agent once, grants it access to a payment credential, and the agent transacts from there. Think of it as handing someone your credit card and saying “buy whatever you think I need.” For a single purchase, that’s manageable. For an agent making dozens or hundreds of autonomous decisions per day across multiple merchants, the model breaks down fast.

The risks aren’t hypothetical. Experian’s 2026 Future of Fraud Forecast, covered by Fortune in January, named “machine-to-machine mayhem” as the top threat to companies this year, a scenario in which bad-actor bots camouflage themselves among legitimate AI shopping agents. Kathleen Peters, Experian’s chief innovation officer for fraud and identity, warned that distinguishing between good and bad bots is no longer a simple binary. A law firm analysis from Sheppard Mullin published in early 2026 flagged the likelihood of rising consumer disputes not from stolen credentials but from AI agents that simply misunderstood a user’s intent and made the wrong purchase. Davis Wright Tremaine raised a pointed question in a legal analysis: how does a merchant prove proper authorization when the AI agent, not the cardholder, initiated the transaction?

The regulatory apparatus is catching up, but slowly. The CFPB sought comment in August 2025 on who qualifies as a consumer “representative.” NIST plans to host a public-private conversation about AI agent standards in April 2026. The Electronic Transactions Association testified before the House Financial Services Committee in January 2026 that existing principles around authorization, consent, and liability should apply — but acknowledged those principles need adaptation for agent-initiated commerce.

Meanwhile, the transaction volumes keep climbing.

Why Session-Level Auth Isn’t Enough

The fundamental gap in most current systems is granularity. A session-level authorization says: “This agent is allowed to act on behalf of this user.” It doesn’t say: “This agent is allowed to buy headphones under $200 from an authorized electronics retailer, but not subscribe to a streaming service or purchase gift cards.” Without that granularity, the attack surface expands with every new capability an agent gains.
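To make the difference concrete, here is a minimal Python sketch of the two kinds of grant described above. Every name in it (`Purchase`, `SessionGrant`, `ScopedGrant`, the merchant hostname, the category labels) is hypothetical and chosen for illustration; it does not reflect any particular vendor’s API.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Purchase:
    merchant: str
    category: str
    amount: Decimal


@dataclass(frozen=True)
class SessionGrant:
    """Session-level authorization: 'this agent may act for this user'."""
    agent_id: str
    user_id: str

    def permits(self, purchase: Purchase) -> bool:
        # Nothing about the purchase itself is constrained.
        return True


@dataclass(frozen=True)
class ScopedGrant:
    """Granular authorization: which merchants, which categories, what limit."""
    agent_id: str
    user_id: str
    allowed_merchants: frozenset
    allowed_categories: frozenset
    max_amount: Decimal  # exclusive upper bound ("under $200")

    def permits(self, purchase: Purchase) -> bool:
        return (
            purchase.merchant in self.allowed_merchants
            and purchase.category in self.allowed_categories
            and purchase.amount < self.max_amount
        )


# Hypothetical example data.
headphones = Purchase("electronics-retailer.example", "headphones", Decimal("149.99"))
gift_card = Purchase("electronics-retailer.example", "gift_card", Decimal("50.00"))

session = SessionGrant("agent-1", "user-1")
scoped = ScopedGrant(
    "agent-1",
    "user-1",
    allowed_merchants=frozenset({"electronics-retailer.example"}),
    allowed_categories=frozenset({"headphones"}),
    max_amount=Decimal("200.00"),
)

print(session.permits(gift_card))  # session grant cannot tell the two purchases apart
print(scoped.permits(headphones))
print(scoped.permits(gift_card))
```

The session grant approves the gift card because it has nothing to check against; the scoped grant rejects it while still allowing the headphones.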

Stripe’s Shared Payment Tokens represent one thoughtful approach: tokens can be scoped to a specific business, limited by time or amount, and revoked at any time. Visa’s Trusted Agent Framework and Mastercard’s Agent Pay protocol are tackling the merchant-side question of how to verify that an incoming agent is legitimate. These are meaningful steps.

But they primarily address the payment credential layer. They answer “can this agent pay?” What’s less addressed — and arguably more important — is the identity and intent layer: “Who is this agent? Who authorized it? What, specifically, is it allowed to do right now?”

What We Built, and Why

That gap is why we created KYAPay at Skyfire. The protocol is designed as an identity-linked payment standard for agent-to-service and agent-to-agent transactions. At its core, KYAPay uses signed JSON Web Tokens to carry verified information about the agent’s owner, the scope of its authorization, and the parameters of any given transaction.

The design principle is that authentication shouldn’t happen once per session. It should happen at the transaction level. When an agent attempts a purchase, the protocol can verify whether the agent is authorized, whether the transaction type falls within its permissions, and whether the user has approved that category of spending, all before money moves.
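As a rough illustration of the idea, and not the KYAPay specification itself, the sketch below signs a JSON Web Token and then checks it for each transaction. Everything specific here is an assumption: the claim names (`owner`, `scope`, `max_amount_cents`), the function names, and the use of HS256 with a shared key, which was picked only so the example runs on the Python standard library. A real deployment would more likely use asymmetric signatures and a vetted JWT library.

```python
import base64
import hashlib
import hmac
import json
import time


def _b64url(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def _b64url_decode(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def sign_token(claims: dict, key: bytes) -> str:
    """Build a compact HS256 JWT: header.payload.signature."""
    header = {"alg": "HS256", "typ": "JWT"}
    signing_input = ".".join(
        _b64url(json.dumps(part, separators=(",", ":")).encode("utf-8"))
        for part in (header, claims)
    )
    signature = hmac.new(key, signing_input.encode("ascii"), hashlib.sha256).digest()
    return f"{signing_input}.{_b64url(signature)}"


def authorize_transaction(token, key, *, merchant, category, amount_cents, now=None):
    """Check signature, expiry, and scope for ONE transaction.

    Returns (True, "ok") or (False, reason).
    """
    now = time.time() if now is None else now
    try:
        header_b64, payload_b64, sig_b64 = token.split(".")
        header = json.loads(_b64url_decode(header_b64))
        expected = hmac.new(
            key, f"{header_b64}.{payload_b64}".encode("ascii"), hashlib.sha256
        ).digest()
        if (
            not isinstance(header, dict)
            or header.get("alg") != "HS256"
            or not hmac.compare_digest(expected, _b64url_decode(sig_b64))
        ):
            return False, "bad signature"
        claims = json.loads(_b64url_decode(payload_b64))
    except ValueError:  # wrong segment count, bad base64, or bad JSON
        return False, "malformed token"

    if claims.get("exp", 0) <= now:
        return False, "token expired"
    scope = claims.get("scope", {})  # hypothetical claim layout
    if merchant not in scope.get("merchants", []):
        return False, "merchant not in scope"
    if category not in scope.get("categories", []):
        return False, "category not in scope"
    if amount_cents > scope.get("max_amount_cents", 0):
        return False, "amount over limit"
    return True, "ok"


# Hypothetical example: the issuer signs a token describing the agent,
# its owner, and what it may buy.
KEY = b"demo-shared-secret"
claims = {
    "sub": "agent-1",    # the agent
    "owner": "user-1",   # who authorized it (assumed claim name)
    "exp": 2_000,        # fixed expiry so the example is deterministic
    "scope": {
        "merchants": ["electronics-retailer.example"],
        "categories": ["headphones"],
        "max_amount_cents": 20_000,
    },
}
token = sign_token(claims, KEY)

ok = authorize_transaction(
    token, KEY, merchant="electronics-retailer.example",
    category="headphones", amount_cents=14_999, now=1_000,
)
denied = authorize_transaction(
    token, KEY, merchant="electronics-retailer.example",
    category="gift_card", amount_cents=5_000, now=1_000,
)
print(ok, denied)
```

The point of the design is that `authorize_transaction` runs on every purchase attempt rather than once per session, so an out-of-scope request is refused before any money moves, even when the token itself is valid.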

We demonstrated this in December 2025 in a controlled environment with Visa’s Intelligent Commerce suite, where an AI agent autonomously researched and purchased a product on Bose.com using the KYAPay protocol and Visa’s Trusted Agent Protocol. The protocol verified the agent’s identity and authorization to both the consumer and the merchant before the transaction cleared.

KYAPay is an open protocol: the specification is publicly available, and the reference implementation is open source. We designed it to be compatible with OAuth2 and OIDC, so businesses don’t need to rebuild their authentication stacks. Our goal isn’t to replace existing payment rails. It’s to add a verification layer that those rails currently lack.

The Pattern We’ve Seen Before

Anyone who worked in digital payments in the early 2000s recognizes this trajectory. Rapid adoption comes first. Then come the fraud vectors and consumer trust problems. Then comes the security infrastructure that should have been there from the start. The difference now is that AI agents operate at machine speed. A fraudulent agent, or even a well-intentioned one that makes a mistake, can execute hundreds of bad transactions before a human notices.

Experian called 2026 a “tipping point” for AI-enabled fraud. The Center for Data Innovation published a policy brief just this week arguing that regulations written for human-initiated transactions need updating for the agentic era. ConsenSys, in a recent NIST submission, urged policymakers to prioritize open, interoperable trust frameworks built collaboratively rather than proprietary systems controlled by a handful of incumbents.

The companies that solve identity verification and trust at the protocol level will shape how this economy functions. The ones that bolt it on after the fact will spend their time managing disputes, chargebacks, and eroded consumer confidence.

The question facing the industry isn’t whether AI agents will transact autonomously. They already are, at scale. The question is whether consumers will trust them to do it, and whether the infrastructure exists to justify that trust.